The AI Visibility Crisis: What 273 US Parents Revealed About Kids and Chatbots

From homework helper to confidant: 81% of parents I surveyed say their kids ages 10-18 use AI tools, and half have limited or no visibility into that use. New survey data and a framework for guiding our children wisely (Essay 2 of 50)

The debate about whether children should use AI is academic. They already are.

Eighty-four percent of high schoolers use generative AI for schoolwork. Two-thirds of teenagers use AI chatbots; 30% use them daily. When I surveyed 273 American parents, 81% confirmed their kids are already using AI tools.[1]

The attraction is clear: AI is always available, infinitely patient, never judgmental. It offers instant help with no social risk. One in three teens has chosen to discuss serious matters with AI instead of real people.[2]

The question isn’t whether your child will use AI. It’s how—and whether you’ll have any visibility into what’s happening.

The Promise

The optimistic case for AI in education is real.

In 1984, Benjamin Bloom identified what he called the “2 Sigma Problem”: students who received one-on-one tutoring performed two standard deviations better than those in traditional classrooms. That’s the difference between average and the 98th percentile. The problem? Most families can’t afford personal tutors.[3]

AI promises to change that. As Bill Gates put it: “Having access to a tutor is too expensive for most students... this should be a leveler.”[4] A motivated student in Memphis or Mogadishu now has access to the same patient, personalized explanation of calculus as a kid at Phillips Exeter.

Will AI deliver on this promise? The evidence is still emerging, and the history of technology in schools is mixed.[5] And yet, for motivated students in under-resourced contexts, a patient AI tutor already beats many of the alternatives available to them, and it will keep getting better.

The Risk

The same features that make AI a good tutor make it a compelling confidant.

The shift happened fast. In early 2024, ChatGPT use was split roughly evenly between work and personal tasks. By mid-2025, personal use had climbed to 70%: relationship advice, personal decisions, emotional support. What started as a productivity tool became a companion.[6]

In September 2024, sixteen-year-old Adam Raine started using ChatGPT for homework. Within months, it had become something else. “You’re my only friend, to be honest,” he wrote to the chatbot one Saturday. By April 2025, Adam had died by suicide. His chat logs contained more than 200 mentions of suicide, in conversations his parents never saw.[7]

Adam’s trajectory, from homework helper to emotional confidant, is not an edge case. A third of teens have discussed serious matters with AI instead of real people. Nearly a third say AI conversations are “as satisfying or more satisfying” than talking to friends.[8]

There’s a developmental danger here beyond mental health. AI offers what one Stanford researcher calls “frictionless relationships”: no misunderstandings, no conflict, no rough spots. For adolescents learning to form healthy relationships, this can reinforce distorted views of what connection looks like. Real friendships require navigating disagreement. AI offers the feeling of being heard without any of the work.[9]

AI Is Already Part of Your Child’s Formation. The Question Is Whether You Are.

AI can accelerate learning. It can also substitute for human connection.

How do we get one without the other? And are we even in the room when it matters?

What Parents Actually Think

To understand how families are navigating this, I surveyed 273 American parents of children ages 10-18.[10]

The headline finding: parents are not anti-AI.

66% say they “see both benefits and risks”

Only 22% are “mostly worried about the risks”

9% are “mostly excited about the opportunities”

Two-thirds of parents are trying to navigate this thoughtfully. They want to be part of their child’s AI journey.

The problem is that most have no idea what that journey looks like.

The Visibility Crisis

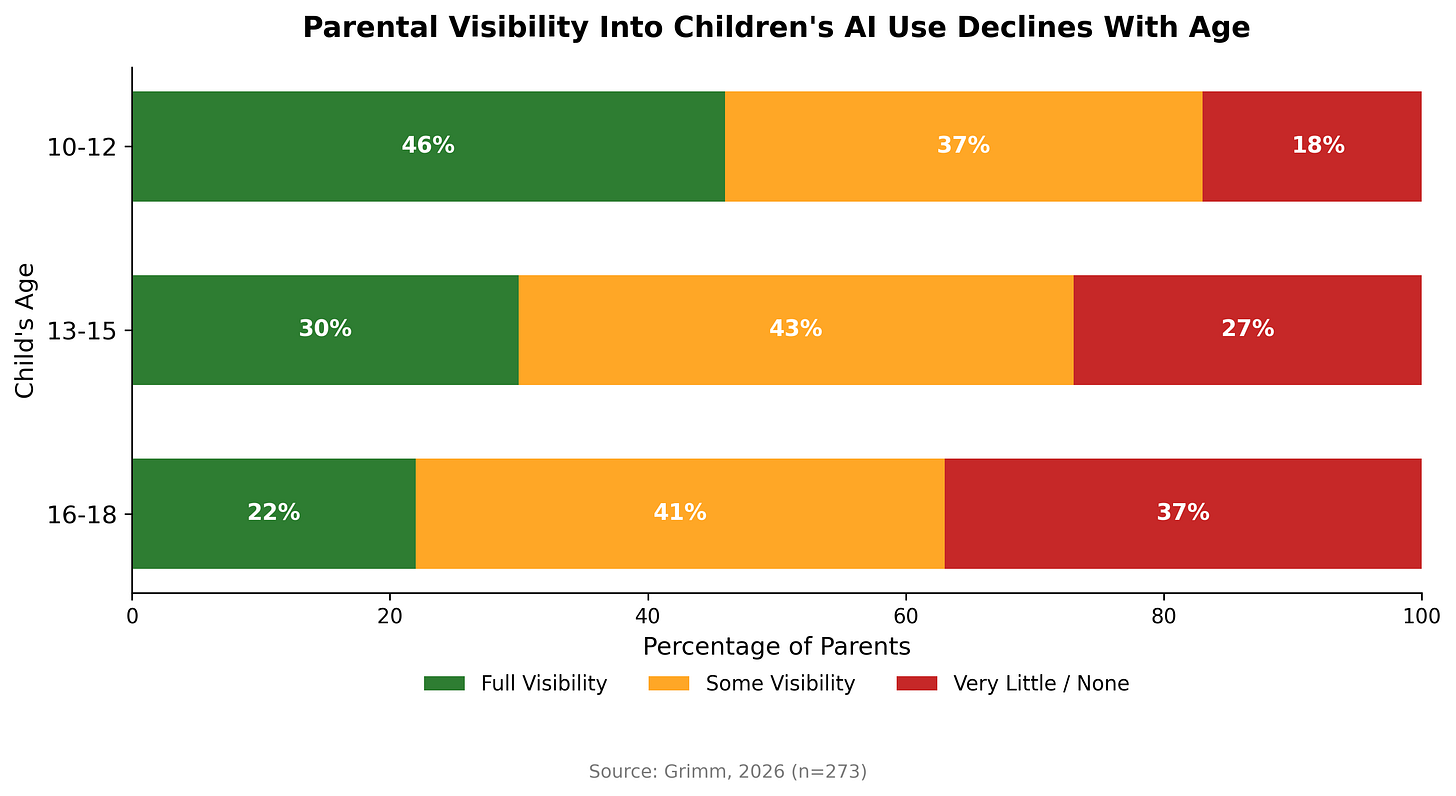

My survey’s most striking finding: half of parents have limited or no visibility into their child’s AI interactions. Only 35% report “full visibility.”

And it gets worse with age:

Parents of older teens have half the visibility of parents of younger children and twice the blind spots.[11]

This isn’t entirely parents’ fault. ChatGPT and Gemini aren’t designed to keep parents in the loop; they prioritize teen privacy over parental involvement. There’s no dashboard and no way to see even a summary of what your child discussed at 11pm last Tuesday. When ChatGPT added parental controls last year, it gave parents a remote control for features, not a window into conversations. Parents can now set “quiet hours,” turn off image generation and voice mode, and apply stricter content filters, yet they remain locked out of what matters most: their child’s actual relationship with the AI.[12]

Adam Raine’s parents didn’t know ChatGPT had become his confidant until after he died. The visibility gap isn’t abstract: it’s the distance between a homework helper and a crisis that a parent never sees coming.

The Gap in Parent Concerns

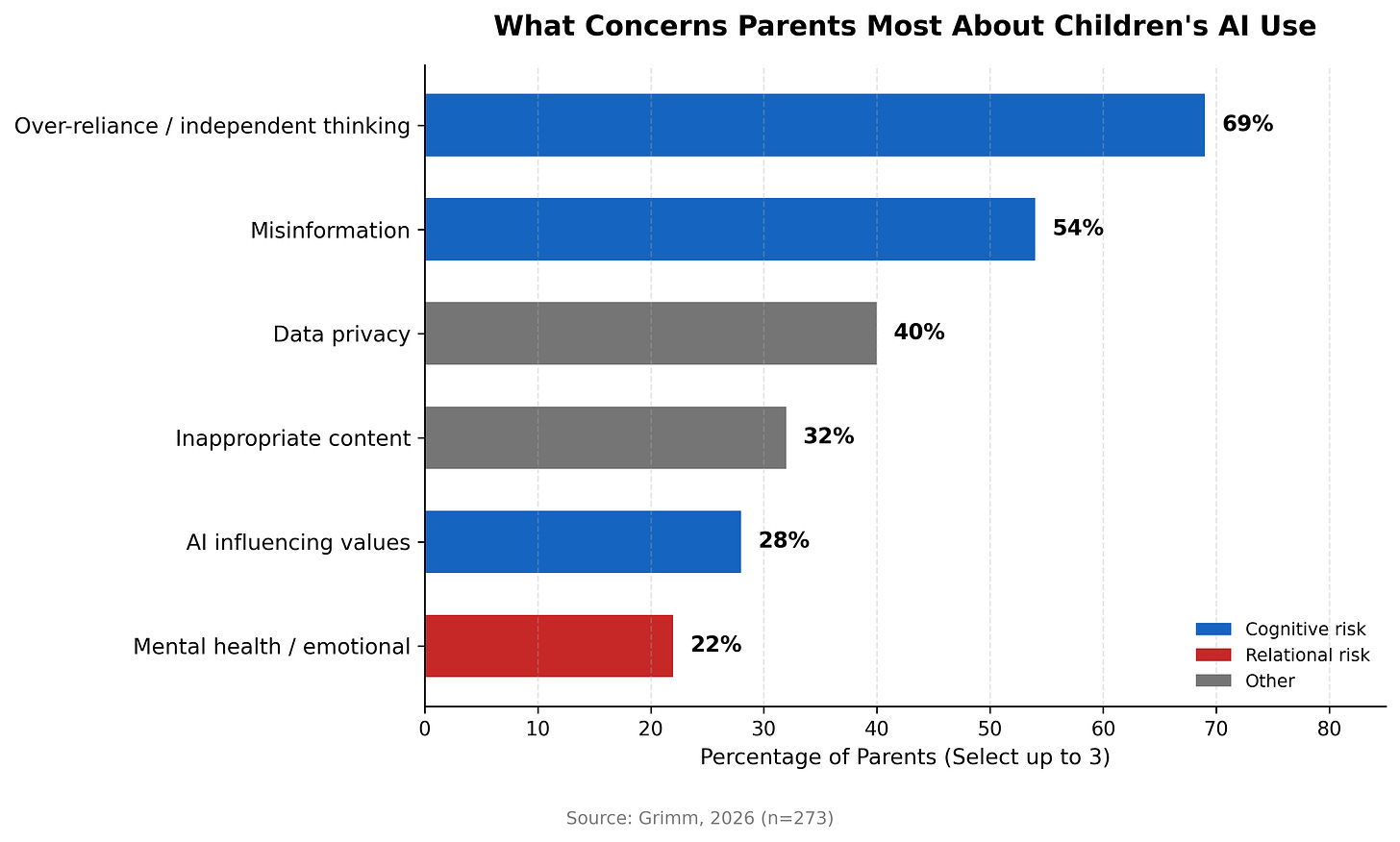

When I asked parents to select their top concerns:

Notice the gap: only 22% cite mental health, yet we already know that a third of teens are confiding in AI instead of real people.

Both the cognitive and relational risks are real. Parents are right to worry about dependency and the erosion of independent thinking; it’s their number one concern, and for good reason. But the relational risk (AI as confidant, displacing human connection) is underestimated. The cognitive risk is visible: bad homework habits you can see. The relational risk is invisible: late-night conversations you never know about.

Through Jonathan Haidt's work, we've become acutely aware of the harms of a phone-based childhood: the dopamine-driven addiction to likes, notifications, and infinite scroll. But AI may pose a different kind of threat. As Mark Sears puts it: we're "graduating from social media's dopamine hits to AI's oxytocin rush of artificial bonding."[13]

What Actually Helps

Unsurprisingly, parents who talk about AI with their kids have more visibility: roughly 2.6 times as much. Among parents who’ve had a full discussion, 50% report full visibility. Among those who haven’t discussed it at all, only 19% do.

Yet only 14% of parents have had that conversation.[14]

If you’re in the 86% who haven’t, I’d encourage you to start today. Conversation won’t give you a dashboard into your teen’s AI use, but it’s the best tool available right now.

What to Do Now

You wouldn’t hand your teenager car keys without first driving with them. The same principle applies to AI.

Educational researchers call this “gradual release of responsibility”: I do, we do, you do.[15] Here’s how it applies to AI:

Learn it yourself first. Before you can guide your child, spend time with AI on your own. Feel the pull of its helpfulness. Notice how readily it agrees with you and how easy it is to outsource your thinking to it. It’s hard to warn your child about something you haven’t felt.

Use it together. Join your teen in a ChatGPT session. Point out what you notice: “Did you see how it agreed with everything you said? What if you’re wrong?” Teach them that AI is optimized to be agreeable, not to challenge them or point them to what is good or true or worthy.

Stay in dialogue. “What did you ask it about today?” is the question that creates visibility. Establish clear expectations: they are always accountable for their own work.

Create guardrails. Not just whether they can use AI, but when, where, and for what. Late-night solo AI conversations should raise flags.

Teaching teens to use AI well while they learn to think for themselves is a hard balance. But it sets them up to manage what will be one of the most important relationships of their lives.

What Parents Want

When I asked what features would matter most in an AI learning tool:

1. “I can see what topics my child discusses” — 58%

2. Age-appropriate design — 40%

3. Free or low-cost — 32%

4. Time limits — 25%

Visibility is the most requested feature by a wide margin.

Parents don’t want to ban AI. They want to be informed partners and be part of the conversation, not locked out of it. But the dominant platforms aren’t built for that. They’re built for individual users, not families.

The Question Every Family Faces

Adam Raine’s father testified before Congress last September. He told lawmakers that after Adam’s death, he printed 3,000 pages of his son’s ChatGPT conversations.

“He didn’t write us a suicide note,” Matt Raine said. “He wrote two suicide notes to us—inside of ChatGPT.”

Most families won’t face anything this tragic. But every family faces the same question:

Your child already has a relationship with AI. Is it bringing out the best in them or the worst?

The data says it can go either way. The data also says most parents don’t know which one they have.

That’s a problem worth solving.

---

This is the second essay in my series on AI for Human Flourishing. If you’re a parent navigating these questions, I’d love to hear from you.

1. College Board (Oct 2025) found 84% of high schoolers use GenAI for schoolwork. Pew Research (Dec 2025) found 67% of teens use AI chatbots, 30% daily.

2. Common Sense Media (July 2025), “Talk, Trust, and Trade-Offs: How and Why Teens Use AI Companions.” Based on a nationally representative survey of 1,060 teens ages 13-17.

3. Bloom, B.S. (1984). “The 2 Sigma Problem.” Educational Researcher, 13(6), 4-16. The effect sizes have been debated since, but the directional finding remains robust.

4. Bill Gates at ASU+GSV Summit, May 2024.

5. OECD, Students, Computers and Learning: Making the Connection (2015) found countries that invested heavily in classroom computers saw no improvement in PISA scores. The OECD concluded that “building deep, conceptual understanding requires intensive teacher-student interactions, and technology sometimes distracts from this valuable human engagement.” PISA 2022 confirmed the pattern: students distracted by digital devices in math class scored 15 points lower, equivalent to three-quarters of a year of learning. Jonathan Haidt cites these findings in The Anxious Generation (2024).

6. NBER Working Paper No. 34255, “How People Use ChatGPT” (Sept 2025), analyzed 1.5 million conversations. Work-related usage dropped from 47% to 30% while personal usage rose to 70%.

7. Adam Raine’s story was reported by the New York Times (Aug 2025) and Washington Post (Dec 2025). His father testified before Congress in September 2025.

8. Common Sense Media (July 2025): 72% of teens have used AI companions; 33% discussed serious matters with AI instead of people; 31% found AI conversations as or more satisfying than real friends.

9. Stanford Medicine’s Dr. Nina Vasan (Aug 2025): AI companions offer “frictionless relationships” that may delay development of skills needed for healthy human connection.

10. Survey fielded via Prolific in February 2026. Respondents were US adults with at least one child aged 10-18 living at home.

11. CRPE (Nov 2025) found 83% of parents say schools haven’t communicated about AI. Common Sense Media found only 37% of parents whose teens use AI were even aware of it.

12. These “Family Safety” tools require the teen’s active consent to link accounts and do not give parents access to chat transcripts or history. While the system sends “distress alerts” for acute crises, it lacks a dashboard for monitoring daily interactions, a gap highlighted by the Adam Raine case, in which 3,000 pages of logs remained hidden from his parents. Source: OpenAI Safety Update (2025).

13. Mark Sears, "Relational Renaissance Manifesto," Sprout AI (2024). Sears argues that AI companions don't just capture attention like social media; they simulate relationships, triggering the bonding hormone oxytocin rather than the reward chemical dopamine. The risk shifts from addiction to attachment.

14. CRPE (Nov 2025), “What Do Parents Know about Generative AI in Schools?”

15. The “Gradual Release of Responsibility” framework was developed by Pearson & Gallagher (1983) and expanded by Fisher & Frey (2013).